NVIDIA ConnectX-7 VPI Adapter: Single-Port NDR 400Gb/s, PCIe 5.0, GPUDirect, RoCE, MCX75310AAS-NEAT

Product Details:

| Brand Name: | Mellanox |
|---|---|
| Model Number: | MCX75310AAS-NEAT (900-9X766-003N-SQ0) |
| Document: | Connectx-7 infiniband.pdf |

Payment & Shipping Terms:

| Minimum Order Quantity: | 1 piece |
|---|---|
| Price: | Negotiable |
| Packaging Details: | Outer carton |
| Delivery Time: | Based on stock |
| Payment Terms: | T/T |
| Supply Ability: | Supplied per project/batch |

Detailed Information

| Model Number: | MCX75310AAS-NEAT (900-9X766-003N-SQ0) | Ports: | Single port |
|---|---|---|---|
| Technology: | InfiniBand | Interface Type: | OSFP |
| Dimensions: | 16.7 cm × 6.9 cm | Origin: | India / Israel / China |
| Transmission Rate: | 400Gb/s (NDR) | Host Interface: | PCIe Gen5 x16 |
| Highlights: | NVIDIA ConnectX-7 network adapter, NDR 400Gb/s PCIe card, Mellanox RoCE GPUDirect adapter | | |

Product Description

MCX755106AS-HEAT.

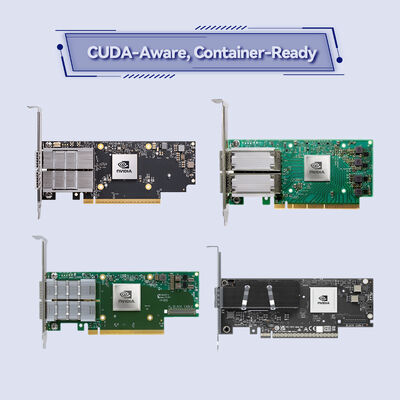

Accelerate AI, scientific computing, and enterprise cloud workloads with the NVIDIA ConnectX-7 family. The MCX755106AS-HEAT delivers up to 200Gb/s InfiniBand (HDR) and 200GbE Ethernet flexibility, in-network computing engines, hardware-level security, and ultra-low latency, all powered by PCIe 5.0.

The NVIDIA ConnectX-7 VPI adapter MCX755106AS-HEAT is a dual-port 200Gb/s smart network interface card designed for high-performance computing (HPC) clusters, AI factories, and enterprise data centers. It combines InfiniBand and Ethernet protocol support, remote direct memory access (RDMA), and advanced in-network computing engines such as SHARPv3 and rendezvous offload. With a PCIe 5.0 host interface and hardware-based security accelerators, the adapter offloads work from the CPU, lowers the total cost of operations, and delivers consistent, low-latency performance.

Ideal for organizations modernizing their IT infrastructure from edge to core, the ConnectX-7 family enables software-defined, secure, and scalable solutions with minimal overhead.

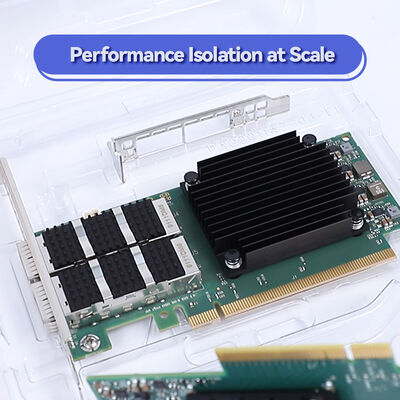

ConnectX-7 integrates NVIDIA ASAP2 (Accelerated Switching and Packet Processing) technology to deliver software-defined networking at line rate without consuming CPU cores. Built-in hardware engines handle IPsec, TLS, and MACsec encryption and decryption, protecting data in motion from edge to core. For storage, built-in NVMe-oF offload and GPUDirect Storage enable direct data movement between storage and GPU memory, reducing latency and maximizing throughput. The adapter also supports advanced time synchronization (PTP with 12ns accuracy) and On-Demand Paging (ODP) for registration-free RDMA, making it well suited to disaggregated and memory-centric architectures.
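PTP (IEEE 1588) estimates a clock's offset from a two-way timestamp exchange between a master and a slave. The minimal sketch below shows the standard offset and delay arithmetic, assuming a symmetric network path; the timestamps are made-up nanosecond values for illustration only. Hardware timestamping on the adapter is what makes real timestamps precise enough for nanosecond-level accuracy.

```python
from dataclasses import dataclass


@dataclass
class PtpExchange:
    """Timestamps (in ns) from one PTP Sync / Delay_Req exchange."""
    t1: int  # master sends Sync (master clock)
    t2: int  # slave receives Sync (slave clock)
    t3: int  # slave sends Delay_Req (slave clock)
    t4: int  # master receives Delay_Req (master clock)


def offset_and_delay(ex: PtpExchange) -> tuple[float, float]:
    """Return (slave offset from master, one-way path delay), assuming a symmetric path."""
    forward = ex.t2 - ex.t1   # = path delay + offset
    backward = ex.t4 - ex.t3  # = path delay - offset
    offset = (forward - backward) / 2
    delay = (forward + backward) / 2
    return offset, delay


if __name__ == "__main__":
    # Hypothetical timestamps: the slave clock runs 40 ns ahead, path delay is 500 ns.
    ex = PtpExchange(t1=1_000, t2=1_540, t3=2_000, t4=2_460)
    print(offset_and_delay(ex))  # (40.0, 500.0)
```

If the path is asymmetric, the difference shows up as an offset error, which is one reason PTP deployments care about symmetric cabling and hardware timestamps.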

- AI & Large Language Model (LLM) clusters: High-speed connectivity for GPU servers, leveraging GPUDirect RDMA and SHARP collective offload.

- High-Performance Computing (HPC): 200Gb/s HDR InfiniBand fabric for MPI, OpenSHMEM, and scientific simulation.

- Cloud data centers and SDN: RoCEv2, overlay acceleration, and SR-IOV for multi-tenant virtualization.

- Enterprise security gateways: Inline MACsec/IPsec encryption for edge-to-core communications with hardware packet offload.

- Storage systems: NVMe-oF/TCP offload for distributed storage platforms that require ultra-low latency and high IOPS.
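Overlay acceleration means the adapter builds and parses tunnel headers in hardware rather than on the host CPU. To illustrate what is being offloaded, here is a minimal sketch that packs and parses the 8-byte VXLAN header defined in RFC 7348 (a flags byte with the I bit, reserved bits, and a 24-bit VXLAN Network Identifier). It covers only that header, not the outer Ethernet/IP/UDP layers, and is not how the adapter itself is programmed.

```python
import struct

VXLAN_FLAG_I = 0x08  # "I" flag: the VNI field is valid (RFC 7348)


def pack_vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header for a 24-bit VNI."""
    if not 0 <= vni < 1 << 24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: flags (8 bits) + 24 reserved bits; word 2: VNI (24 bits) + 8 reserved bits.
    return struct.pack("!II", VXLAN_FLAG_I << 24, vni << 8)


def parse_vxlan_header(header: bytes) -> int:
    """Return the VNI from an 8-byte VXLAN header, checking the I flag."""
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_I:
        raise ValueError("I flag not set; VNI is not valid")
    return vni_word >> 8


if __name__ == "__main__":
    hdr = pack_vxlan_header(5001)
    print(hdr.hex())  # 0800000000138900
    print(parse_vxlan_header(hdr))  # 5001
```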

✅ Operating systems: Inbox drivers for Linux (RHEL, Ubuntu), Windows Server, VMware ESXi (SR-IOV), Kubernetes (CNI plugins).

✅ Protocols: InfiniBand (HDR/EDR), Ethernet (200GbE down to 10GbE), RoCE, RoCEv2, iSCSI, NVMe-oF, SRP, iSER, NFS over RDMA, SMB Direct.

✅ HPC middleware: NVIDIA HPC-X, UCX, UCC, NCCL, OpenMPI, MVAPICH, MPICH, OpenSHMEM.

✅ Management: NC-SI, MCTP over PCIe/SMBus, PLDM, Redfish, SPDM, secure firmware update.

| المواصفات | تفاصيل |

|---|---|

| نموذج المنتج | MCX755106AS-HEAT(NVIDIA ConnectX-7 VPI) |

| السرعة القصوى | InfiniBand HDR 200Gb/s؛ إثنر حتى 200GbE |

| تكوين الموانئ | منفذ مزدوج (يدعم 1/2 البوابة المتغيرات، هذا النموذج QSFP56 منفذ مزدوج) |

| واجهة المضيف | PCIe 5.0 x16 (حتى 32 مسارًا مع التقسيم / Multi-Host) |

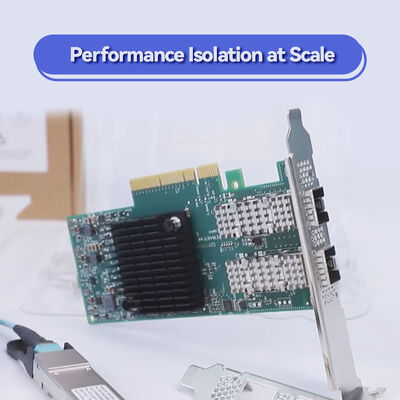

| عامل الشكل | PCIe HHHL (نصف الارتفاع ونصف الطول) |

| دعم البروتوكول | إنفيني باند (HDR/EDR) وإيثيرنث (200GbE/100GbE/50GbE/25GbE/10GbE) |

| RDMA | RoCE، RoCEv2، النقل الموثوق بهاردو، DCT، XRC، التصفح حسب الطلب (ODP) |

| أمان التحميل | IPsec/TLS/MACsec في الخط (AES-GCM 128/256-bit) ، بدء تشغيل آمن، تشفير فلاش، شهادة الجهاز |

| التخزين التفريغ | NVMe-oF (TCP/Fabrics) ، NVMe/TCP، T10-DIF، مستوى الكتل XTS-AES 256/512 بت |

| توقيت و مزامنة | IEEE 1588v2 (PTP) ، دقة 12ns، SyncE (G.8262.1) نظام الطاقة الكهربائية القابل للتكوين، الجدول الزمني |

| الافتراضية | SR-IOV، تسريع VirtIO، تحويل الحمل (VXLAN، جنيف، NVGRE) |

| الميزات المتقدمة | GPUDirect RDMA ، GPUDirect Storage ، SHARP Offload ، التوجيه التكيفي ، Burst Buffer Offload |

| الإدارة والإطلاق | UEFI، PXE، iSCSI البدء، InfiniBand البدء عن بعد، PLDM، Redfish، SPDM، MCTP |
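T10-DIF protects each block with an 8-byte protection field whose 2-byte guard tag is a CRC-16 over the block data; the adapter can generate and verify it in hardware. For illustration only, the sketch below is a plain bit-by-bit software version of that CRC (polynomial 0x8BB7, initial value 0, MSB-first, no final XOR). The 512-byte sector contents are hypothetical, and an offloaded data path would not compute the guard on the CPU like this.

```python
T10_DIF_POLY = 0x8BB7  # CRC-16 generator polynomial used for the T10-DIF guard tag


def crc16_t10dif(data: bytes) -> int:
    """Compute the T10-DIF guard CRC (MSB-first, init 0x0000, no final XOR)."""
    crc = 0x0000
    for byte in data:
        crc ^= byte << 8  # feed the next byte into the top of the 16-bit register
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ T10_DIF_POLY) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def guard_ok(block: bytes, guard: int) -> bool:
    """Check a block against its stored guard tag, as the receiving side would."""
    return crc16_t10dif(block) == guard


if __name__ == "__main__":
    block = bytes(range(256)) * 2  # one hypothetical 512-byte sector
    guard = crc16_t10dif(block)
    print(hex(guard))
    print(guard_ok(block, guard))  # True
    corrupted = b"\xff" + block[1:]  # single corrupted byte
    print(guard_ok(corrupted, guard))  # False
```

Because the CRC has degree 16, any error burst of 16 bits or fewer (such as the single corrupted byte above) is always detected.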

*Specifications are based on NVIDIA public documentation. Verify the exact configuration for your system before ordering.

| Model | Ports / Speed | Host Interface | Primary Target |
|---|---|---|---|
| MCX755106AS-HEAT | 2 ports, HDR 200Gb/s InfiniBand / 200GbE | PCIe 5.0 x16 | AI clusters, HPC, enterprise data centers |
| MCX75310AAS-NEAT | 1 port, NDR 400Gb/s InfiniBand | PCIe 5.0 x16 | High-end AI, large-scale HPC |
| OCP 3.0 variants | SFF / TSFF with HDR / NDR | PCIe Gen5 | Open Compute Project servers |

- Ultra-low latency and high throughput: Hardware RDMA and in-network computing reduce application tail latency.

- Unified fabric: A single adapter supports both InfiniBand and Ethernet, simplifying inventory and deployment.

- Future-proof PCIe 5.0: 32 GT/s per lane, double the bandwidth of PCIe 4.0, eliminating I/O bottlenecks.

- Lower TCO: Offloads networking, storage, and security tasks from the CPU, enabling more efficient server utilization.

- AI-optimized: Native GPUDirect and SHARPv3 collective operations accelerate model training and inference.
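The "32 GT/s per lane, double PCIe 4.0" figure can be turned into usable bandwidth with a little arithmetic. This sketch assumes 128b/130b line encoding (used by PCIe 3.0 through 5.0) and ignores TLP/DLLP protocol overhead, so real-world throughput is somewhat lower; it then compares an x16 link against the aggregate line rate of the adapter's ports.

```python
# Transfer rate per lane in GT/s (one bit per transfer per lane).
PCIE_GT_PER_LANE = {3: 8.0, 4: 16.0, 5: 32.0}
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding for PCIe 3.0-5.0


def pcie_raw_gbps(gen: int, lanes: int) -> float:
    """Per-direction bandwidth in Gb/s after line encoding, before protocol overhead."""
    return PCIE_GT_PER_LANE[gen] * lanes * ENCODING_EFFICIENCY


def gbps_to_gbytes(gbps: float) -> float:
    """Convert gigabits per second to gigabytes per second."""
    return gbps / 8


if __name__ == "__main__":
    for gen in (4, 5):
        bw = pcie_raw_gbps(gen, 16)
        print(f"PCIe {gen}.0 x16: {bw:.1f} Gb/s ~ {gbps_to_gbytes(bw):.1f} GB/s per direction")
    ports = 2 * 200  # two 200Gb/s ports (or one 400Gb/s port) at line rate
    print(f"Ports: {ports} Gb/s; fits Gen5 x16 on paper: {ports < pcie_raw_gbps(5, 16)}")
```

Under these assumptions a Gen5 x16 link offers roughly 504 Gb/s (about 63 GB/s) per direction, enough on paper for 400Gb/s of port traffic, while a Gen4 x16 link (roughly 252 Gb/s) would not be.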

Hong Kong Starsurge Group Co., Limited provides end-to-end support, including pre-sales consulting, custom firmware configuration, and worldwide shipping. All ConnectX-7 adapters are backed by a one-year (extendable) warranty and technical assistance from experienced network engineers. We offer multilingual support, RMA services, and fast replacement logistics to minimize downtime.

- Make sure the PCIe slot provides sufficient power (75W through the slot; no auxiliary power is required for standard operation).

- Check physical clearance: the HHHL form factor fits most 1U/2U servers; OCP variants require a compatible mezzanine slot.

- For RoCE deployments, configure DCB (including Priority Flow Control, PFC) and ECN on the switches for lossless Ethernet.

- Always update the firmware to the latest stable release to benefit from security and performance improvements.
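For RoCE, switches commonly mark ECN with a WRED-style profile (as in DCQCN-style deployments): below a minimum queue threshold nothing is marked, at or above a maximum threshold every packet is marked, and in between the marking probability rises linearly up to a configured maximum. The sketch below models that curve only; the threshold values are hypothetical placeholders, not vendor recommendations, and the actual settings and their names depend on your switch OS.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EcnProfile:
    """WRED-style ECN marking profile (hypothetical values, queue depth in KB)."""
    k_min: float  # no marking at or below this queue depth
    k_max: float  # mark every packet at or above this queue depth
    p_max: float  # marking probability approached just below k_max (0..1)


def mark_probability(profile: EcnProfile, queue_kb: float) -> float:
    """Probability that a packet is ECN-marked (CE) at the given queue depth."""
    if queue_kb <= profile.k_min:
        return 0.0
    if queue_kb >= profile.k_max:
        return 1.0
    # Linear ramp from 0 at k_min toward p_max at k_max.
    return profile.p_max * (queue_kb - profile.k_min) / (profile.k_max - profile.k_min)


if __name__ == "__main__":
    profile = EcnProfile(k_min=100, k_max=400, p_max=0.2)  # hypothetical thresholds
    for depth in (50, 100, 250, 399, 400, 800):
        print(depth, round(mark_probability(profile, depth), 3))
```

Marking early and probabilistically lets RoCE senders slow down before queues grow large enough to trigger PFC pause frames.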

Founded in 2008, Hong Kong Starsurge Group Co., Limited is a technology-driven provider of network hardware, IT services, and system integration solutions. We serve customers worldwide with products including network switches, NICs, wireless access points, controllers, cables, and other networking equipment. Our experienced sales and technical teams support industries such as government, healthcare, manufacturing, education, and finance. With a customer-first approach, Starsurge focuses on reliable quality, responsive service, and customized solutions for dependable network infrastructure.

We also offer IoT solutions, network management systems, custom software development, multilingual support, and global delivery. Choose Starsurge as your trusted partner for NVIDIA networking solutions.