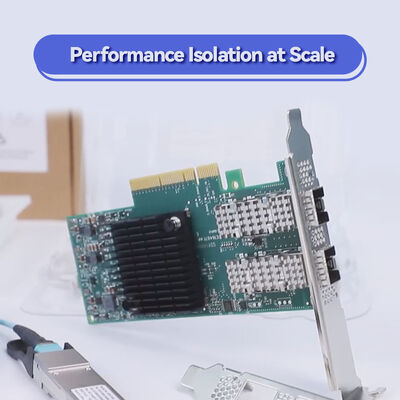

NVIDIA ConnectX-6 MCX653105A-HDAT 200 Gb/s Single-Port InfiniBand Adapter with Hardware Encryption and PCIe 4.0

Product details:

| Brand name: | Mellanox |
|---|---|
| Model number: | MCX653105A-HDAT |
| Document: | connectx-6-infiniband.pdf |

Payment and shipping terms:

| Minimum order quantity: | 1 piece |
|---|---|
| Price: | Negotiable |
| Packaging details: | Outer carton |
| Delivery time: | Based on stock |
| Payment terms: | T/T |
| Supply ability: | Supplied per project/batch |

Detailed information

| Product status: | Stock | Application: | Server |
|---|---|---|---|
| Condition: | New and original | Type: | Wired |
| Max speed: | Up to 200 Gb/s | Connector: | QSFP56 |
| Model: | MCX653105A-HDAT | | |
| Highlight: | NVIDIA ConnectX-6 InfiniBand adapter, 200 Gb/s PCIe 4.0 network card, InfiniBand adapter with hardware encryption | | |

Product description

Single-port 200 Gb/s HDR smart adapter with in-network computing and hardware encryption

The NVIDIA ConnectX-6 MCX653105A-HDAT delivers a full 200 Gb/s of throughput over a single QSFP56 port, combining ultra-low latency, hardware offloads, and block-level XTS-AES encryption. Designed for HPC and AI clusters and NVMe-oF storage, this PCIe 4.0 x16 adapter offloads collective operations, RDMA, and encryption from the CPU, improving application performance and scalability in demanding data center environments.

The MCX653105A-HDAT belongs to the NVIDIA ConnectX-6 InfiniBand adapter family, designed for extreme performance in modern data centers. This single-port QSFP56 card supports up to 200 Gb/s (HDR InfiniBand or 200GbE) over a PCIe 3.0/4.0 x16 host interface, with full hardware acceleration for RDMA, reliable transport, and in-network computing. By integrating collective offloads, MPI tag matching, and NVMe over Fabrics acceleration, the adapter significantly reduces CPU overhead while improving network efficiency. Its built-in AES-XTS block encryption secures data without affecting performance, making it well suited to financial services, government research, and hyperscale cloud deployments.

- Up to 200 Gb/s (HDR InfiniBand / 200GbE) on a single QSFP56 port

- Up to 215 million messages per second

- Block-level XTS-AES 256/512-bit encryption, FIPS compliant

- Collective offloads, NVMe-oF target/initiator offloads, burst buffer

- PCIe Gen 4.0 / 3.0 x16 (backward compatible)

- SR-IOV (1K VFs), ASAP2, Open vSwitch offload, overlay tunnels

- RoCE, XRC, DCT, on-demand paging, GPUDirect RDMA support

- Low-profile stand-up PCIe card with the tall bracket pre-installed and a short bracket included

NVIDIA ConnectX-6 integrates in-network computing acceleration engines that offload critical data center operations from the host CPU. The MCX653105A-HDAT supports hardware-based reliable transport, adaptive routing, and congestion control, which keeps performance predictable in large-scale fabrics. Remote Direct Memory Access (RDMA) enables zero-copy data transfers that bypass the operating system kernel. With NVIDIA GPUDirect RDMA, GPU memory communicates directly with the network adapter, reducing latency for AI training and HPC simulations. Built-in block-level XTS-AES encryption (256/512-bit key) secures data in transit and at rest with no CPU overhead, and the adapter is designed to meet FIPS 140-2 compliance requirements.

- High-performance computing (HPC): large-scale simulation, weather forecasting, and computational fluid dynamics that require low-latency 200 Gb/s connectivity.

- AI and deep learning clusters: distributed training with GPUDirect RDMA, increasing throughput between GPU nodes.

- NVMe-oF storage systems: high-performance disaggregated storage with full target/initiator offload, reducing CPU utilization.

- Hyperscale cloud data centers: virtualized environments with SR-IOV, overlay networks, and hardware-accelerated encryption.

- Financial trading platforms: deterministic, ultra-low-latency networking for algorithmic trading.

The ConnectX-6 MCX653105A-HDAT works with NVIDIA Quantum InfiniBand switches (HDR 200 Gb/s), standard 200GbE switches, and a wide range of server platforms. It supports the major operating systems and virtualization stacks, allowing flexible integration into existing infrastructure.

| Parameter | Specification |
|---|---|
| Product model | MCX653105A-HDAT |
| Data rate | 200 Gb/s, 100 Gb/s, 50 Gb/s, 40 Gb/s, 25 Gb/s, 10 Gb/s, 1 Gb/s (InfiniBand and Ethernet) |
| Ports and connector | 1 × QSFP56 (supports passive copper cables and active optical cables (AOC)) |
| Host interface | PCIe Gen 4.0 x16 (also compatible with Gen 3.0 and 2.0; supports x8, x4, x2, and x1 configurations) |
| Latency | Sub-microsecond (typically <0.7 µs) |
| Message rate | Up to 215 million messages per second |
| Encryption | XTS-AES 256/512-bit hardware offload, FIPS 140-2 ready |
| Form factor | Low-profile stand-up PCIe (tall bracket pre-installed, short bracket included as an accessory) |
| Dimensions (without bracket) | 167.65 mm × 68.90 mm |
| Power consumption | 22 W to 24 W (depends on link utilization) |
| Virtualization | SR-IOV (up to 1000 virtual functions), VMware NetQueue, NPAR, ASAP2 flow offload |
| Management and monitoring | NC-SI, MCTP over PCIe/SMBus, PLDM (DSP0248, DSP0267), I2C, SPI flash |
| Remote boot | InfiniBand, iSCSI, PXE, UEFI |
| Operating systems | RHEL, SLES, Ubuntu, Windows Server, FreeBSD, VMware vSphere, OpenFabrics Enterprise Distribution (OFED), WinOF-2 |

Selection guide - ConnectX-6 adapter variants

| Model | Ports | Max speed (per port) | Host interface | Key features |
|---|---|---|---|---|
| MCX653105A-HDAT | 1 × QSFP56 | 200 Gb/s | PCIe 3.0/4.0 x16 | Stand-up PCIe card, single-port 200 Gb/s |
| MCX653106A-HDAT | 2 × QSFP56 | 200 Gb/s | PCIe 3.0/4.0 x16 | Stand-up PCIe card, dual-port 200 Gb/s |
| MCX653105A-ECAT | 1 × QSFP56 | 100 Gb/s | PCIe 3.0/4.0 x16 | Stand-up PCIe card, single-port 100 Gb/s |
| MCX653106A-ECAT | 2 × QSFP56 | 100 Gb/s | PCIe 3.0/4.0 x16 | Stand-up PCIe card, dual-port 100 Gb/s |
| MCX653436A-HDAT (OCP 3.0) | 2 × QSFP56 | 200 Gb/s | PCIe 3.0/4.0 x16 | OCP 3.0 small form factor, dual-port 200 Gb/s |

Note: The MCX653105A-HDAT includes a full hardware encryption engine (XTS-AES) and supports both InfiniBand and Ethernet protocols at up to 200 Gb/s. For dual-port configurations, consider the -HDAT variants with two QSFP56 cages.

- Single-port design: delivers maximum throughput for compute nodes where per-port bandwidth is the priority.

- Built-in hardware security: XTS-AES block encryption with no CPU overhead, meeting FIPS compliance for regulated industries.

- Accelerated storage and AI: NVMe-oF offload and GPUDirect RDMA significantly improve performance for AI training and software-defined storage.

- Future-ready PCIe 4.0: doubles host bandwidth relative to PCIe 3.0, removing the host-side bottleneck for 200 Gb/s networking.

- Simplified management: a unified driver stack (OFED, WinOF-2) and broad operating system compatibility reduce deployment complexity.

Service and support

Frequently asked questions

• This standard air-cooled card is not compatible with cold-plate variants for liquid-cooled platforms; contact Starsurge for liquid-cooled SKUs.

• Always use QSFP56-rated cables or modules to achieve 200 Gb/s performance.

• Confirm that the driver version is compatible with your operating system and kernel before deployment.
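One way to confirm that a cable or module actually negotiated the full rate is to read the link rate the Linux RDMA stack reports. The following is a minimal sketch, not a vendor tool. It assumes the standard Linux sysfs layout `/sys/class/infiniband/<device>/ports/<n>/rate`, whose contents look like `200 Gb/sec (4X HDR)`; the function names `parse_rate_gbps` and `ports_below` are hypothetical helpers introduced for illustration.

```python
import re
from pathlib import Path

# Assumed sysfs location used by the Linux RDMA stack; adjust if your system differs.
SYSFS_IB = Path("/sys/class/infiniband")


def parse_rate_gbps(rate_text: str) -> float:
    """Extract the numeric Gb/s value from a string such as '200 Gb/sec (4X HDR)'."""
    match = re.match(r"\s*([\d.]+)\s*Gb/sec", rate_text)
    if match is None:
        raise ValueError(f"unrecognized rate string: {rate_text!r}")
    return float(match.group(1))


def ports_below(expected_gbps: float = 200.0, root: Path = SYSFS_IB) -> list[tuple[str, float]]:
    """Return (port directory, rate) pairs for ports linked below the expected rate."""
    slow = []
    for rate_file in sorted(root.glob("*/ports/*/rate")):
        rate = parse_rate_gbps(rate_file.read_text())
        if rate < expected_gbps:
            slow.append((str(rate_file.parent), rate))
    return slow


if __name__ == "__main__":
    # Prints nothing when every port reports at least the expected rate.
    for port, rate in ports_below():
        print(f"{port}: linked at {rate:g} Gb/s, expected 200 Gb/s")
```

A port that reports less than 200 Gb/s with this card often points to a cable, module, or switch port rated below HDR, so it is worth checking before looking at drivers.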

About Hong Kong Starsurge Group

Global delivery · Multilingual support · OEM and custom integration services

Key facts at a glance

| Component | Support status | Notes |
|---|---|---|
| NVIDIA Quantum HDR InfiniBand switches | ✓ Compatible | 200 Gb/s fabric, adaptive routing |
| 200GbE switches (IEEE 802.3) | ✓ Compatible | Requires FEC modes that match the switch specification |
| GPUDirect RDMA | ✓ Yes | NVIDIA GPU families (Volta, Ampere, Hopper, etc.) |
| VMware vSphere 7.0/8.0 | ✓ Certified | Native drivers, SR-IOV support |
| Linux (RHEL, Ubuntu, SLES) | ✓ Full support | MLNX_OFED, inbox drivers available |
| Windows Server 2019/2022 | ✓ Supported | WinOF-2 driver package |

Buyer checklist - before ordering MCX653105A-HDAT

- [ ] Verify the server PCIe slot: x16 physical slot, Gen 4 recommended for full 200 Gb/s performance.

- [ ] Select suitable QSFP56 cables or transceivers (passive copper up to 5 m, AOC, or optics).

- [ ] Verify operating system driver support (OFED release or inbox driver).

- [ ] Confirm that encryption compliance requirements are met (XTS-AES, FIPS).

- [ ] Assess environmental cooling: high-speed adapters may require directed airflow.
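The PCIe slot item in the checklist comes down to simple arithmetic, sketched below. The per-lane transfer rates (8 GT/s for Gen 3, 16 GT/s for Gen 4) and the 128b/130b line encoding are standard PCIe figures. The calculation ignores packet-level protocol overhead, so real usable bandwidth is somewhat lower. The function names are hypothetical and chosen only for this illustration.

```python
# Rough PCIe bandwidth per direction, ignoring TLP/DLLP protocol overhead.
GT_PER_LANE = {"3.0": 8.0, "4.0": 16.0}  # gigatransfers per second per lane
ENCODING_EFFICIENCY = 128 / 130          # 128b/130b line encoding (Gen 3 and Gen 4)


def pcie_gbps(gen: str, lanes: int) -> float:
    """Return the approximate one-direction bandwidth in Gb/s for a PCIe link."""
    return GT_PER_LANE[gen] * lanes * ENCODING_EFFICIENCY


def can_feed_port(gen: str, lanes: int, port_gbps: float = 200.0) -> bool:
    """True if the slot's encoded bandwidth is at least the port line rate."""
    return pcie_gbps(gen, lanes) >= port_gbps


if __name__ == "__main__":
    for gen, lanes in [("3.0", 16), ("4.0", 8), ("4.0", 16)]:
        bw = pcie_gbps(gen, lanes)
        ok = can_feed_port(gen, lanes)
        print(f"PCIe {gen} x{lanes}: ~{bw:.0f} Gb/s, 200 Gb/s capable: {ok}")
```

Under these assumptions a Gen 3 x16 slot tops out near 126 Gb/s and caps the port well below line rate, while a Gen 4 x16 slot provides roughly 252 Gb/s, which is why the checklist recommends Gen 4.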
