NVIDIA ConnectX-6 MCX653106A-HDAT 200Gb/s Dual-Port InfiniBand Smart Adapter

Product Details:

| Brand Name: | Mellanox |
|---|---|
| Model Number: | MCX653106A-HDAT-SP |
| Document: | connectx-6-infiniband.pdf |

Payment & Shipping Terms:

| Minimum Order Quantity: | 1 piece |
|---|---|
| Price: | Negotiable |
| Packaging Details: | Outer carton |
| Delivery Time: | Based on stock |
| Payment Terms: | T/T |
| Supply Ability: | Supplied per project/batch |

Detailed Information:

| Product Status: | In stock | Application: | Server |
|---|---|---|---|
| Condition: | Brand new, original | Type: | Wired |
| Max Speed: | Up to 200 Gb/s | Connector: | QSFP56 |
| Model: | MCX653106A-HDAT | Name: | MCX653106A-HDAT-SP Mellanox Network Card, 200GbE High-Speed Smart Adapter |
| Highlight: | NVIDIA ConnectX-6 InfiniBand adapter, Mellanox 200Gb/s network card, dual-port InfiniBand smart adapter |

Product Description

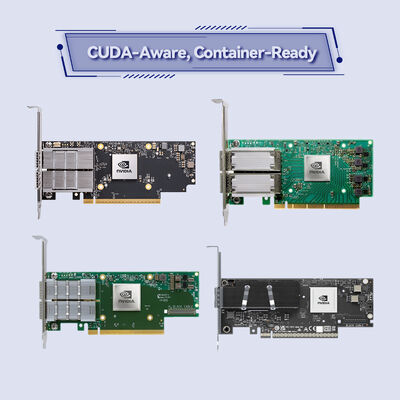

NVIDIA ConnectX-6 MCX653106A-HDAT

200Gb/s Dual-Port HDR InfiniBand Smart Adapter

Unleash extreme high-performance computing (HPC) and artificial intelligence (AI) performance with NVIDIA In-Network Computing. This PCIe 4.0 x16 adapter delivers up to 215 million messages per second and hardware-based acceleration for the most demanding data centers.
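The two headline figures (200 Gb/s and 215 million messages/s) can be related with simple arithmetic. The sketch below is a back-of-envelope calculation, not a benchmark: it assumes the full 200 Gb/s is usable payload and ignores packet headers and framing, so measured crossover points will differ. Under those assumptions it estimates the time budget per message and the message size below which the message rate, rather than link bandwidth, becomes the limit.

```python
# Back-of-envelope arithmetic using the headline figures quoted on this page.
# Assumption: 200 Gb/s is treated as pure payload bandwidth; headers and
# framing are ignored, so real-world crossover points will be different.

LINK_GBPS = 200      # headline bandwidth, Gb/s
MSG_RATE = 215e6     # headline message rate, messages per second


def ns_per_message(rate: float = MSG_RATE) -> float:
    """Average time budget per message at the quoted message rate, in ns."""
    return 1e9 / rate


def crossover_bytes(link_gbps: float = LINK_GBPS, rate: float = MSG_RATE) -> float:
    """Message size below which the message rate, not bandwidth, is the limit."""
    bytes_per_second = link_gbps * 1e9 / 8
    return bytes_per_second / rate


def throughput_gbps(msg_bytes: int, link_gbps: float = LINK_GBPS,
                    rate: float = MSG_RATE) -> float:
    """Idealized throughput for a stream of fixed-size messages."""
    rate_limited = msg_bytes * 8 * rate / 1e9
    return min(rate_limited, link_gbps)


if __name__ == "__main__":
    print(f"{ns_per_message():.2f} ns per message")
    print(f"crossover at about {crossover_bytes():.0f} bytes")
    for size in (8, 64, 128, 4096):
        print(f"{size:5d} B -> {throughput_gbps(size):6.1f} Gb/s")
```

Under these assumptions the crossover lands at roughly 116 bytes per message: smaller messages are bound by the message rate, larger ones by the link.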

Product Overview

The NVIDIA ConnectX-6 MCX653106A-HDAT is a 200Gb/s dual-port InfiniBand and Ethernet smart adapter designed as a cornerstone of the NVIDIA Quantum InfiniBand platform. It integrates advanced features such as remote direct memory access (RDMA), NVMe over Fabrics (NVMe-oF) offload, and block-level encryption to significantly reduce CPU load. By moving computation into the network fabric, the adapter improves the scalability and efficiency of HPC, machine learning workloads, and large-scale cloud infrastructure.

Key Features

- Extreme throughput: up to 200 Gb/s per port, with a maximum aggregate bandwidth of 200 Gb/s shared across both ports.

- In-Network Computing: hardware offload of collective operations, MPI tag matching, and the rendezvous protocol.

- Block-level encryption: hardware XTS-AES 256/512-bit encryption for FIPS-compliant data security.

- PCIe 4.0 support: 16 GT/s per-lane link rate, fully backward compatible with PCIe 3.0/2.0/1.1.

- Message rate: up to 215 million messages per second for extreme small-packet performance.

- Storage offloads: NVMe-oF offload (target and initiator), T10-DIF, and support for SRP, iSER, and NFS over RDMA.

- Virtualization: SR-IOV with up to 1,000 virtual functions, and ASAP2 for Open vSwitch (OVS) offload.
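On Linux, SR-IOV virtual functions are usually created through the standard PCI sysfs attributes `sriov_totalvfs` and `sriov_numvfs`. The following is a minimal sketch under stated assumptions: SR-IOV has already been enabled in the adapter firmware (for NVIDIA adapters this is typically done with `mlxconfig`, using settings such as `SRIOV_EN` and `NUM_OF_VFS`), the script runs as root, and the interface name `ens1f0np0` is a hypothetical example. The `set_num_vfs` helper is illustrative, not an NVIDIA tool, and its `sysfs_root` parameter exists only so the logic can be exercised against a scratch directory.

```python
from pathlib import Path


def set_num_vfs(ifname: str, count: int, sysfs_root: str = "/sys") -> int:
    """Hypothetical helper: set the SR-IOV VF count for a network interface.

    Uses the standard Linux attributes under /sys/class/net/<ifname>/device/.
    Returns the VF count that is configured afterwards.
    """
    dev = Path(sysfs_root) / "class" / "net" / ifname / "device"

    # Maximum number of VFs the device (as configured in firmware) exposes.
    total = int((dev / "sriov_totalvfs").read_text().strip())
    if not 0 <= count <= total:
        raise ValueError(f"{ifname}: requested {count} VFs, device exposes {total}")

    numvfs = dev / "sriov_numvfs"
    current = int(numvfs.read_text().strip())
    if current == count:
        return current  # nothing to do

    # The kernel rejects changing one non-zero VF count to another directly,
    # so drop to 0 first.
    if current != 0 and count != 0:
        numvfs.write_text("0")
    numvfs.write_text(str(count))
    return count
```

For example, `set_num_vfs("ens1f0np0", 8)` would request eight VFs on that (hypothetical) interface, provided the firmware exposes at least eight.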

NVIDIA In-Network Computing

ConnectX-6 integrates NVIDIA's In-Network Computing engines, which offload collective communication operations (such as MPI all-reduce) from the CPU to the network. This significantly reduces latency and frees CPU cycles for application processing. Combined with RDMA and advanced memory mapping with user-mode registration and remapping of memory (UMR), the adapter enables GPUDirect RDMA and peer-to-peer GPU communication across the network, accelerating AI training clusters and complex simulations.
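To make the term concrete, the sketch below simulates what an MPI all-reduce (sum) computes: every rank contributes a vector, and every rank ends up with the element-wise total. It uses recursive doubling, one common all-reduce algorithm chosen here purely for illustration. It runs in ordinary Python and does not model how ConnectX-6 hardware performs the offload, which this page does not describe. It assumes a power-of-two number of ranks.

```python
from typing import List


def allreduce_recursive_doubling(values: List[List[float]]) -> List[List[float]]:
    """Simulate a sum all-reduce across len(values) ranks (power of two).

    In round k, each rank exchanges its whole buffer with the partner whose
    rank differs in bit k, and both keep the element-wise sum. After log2(n)
    rounds every rank holds the global sum.
    """
    n = len(values)
    if n == 0 or n & (n - 1):
        raise ValueError("this sketch assumes a power-of-two number of ranks")

    bufs = [list(v) for v in values]
    step = 1
    while step < n:
        # Snapshot so every rank reads its partner's pre-exchange buffer.
        snapshot = [list(b) for b in bufs]
        for rank in range(n):
            partner = rank ^ step
            bufs[rank] = [a + b for a, b in zip(snapshot[rank], snapshot[partner])]
        step <<= 1
    return bufs


if __name__ == "__main__":
    print(allreduce_recursive_doubling([[1, 2], [3, 4], [5, 6], [7, 8]]))
    # → [[16, 20], [16, 20], [16, 20], [16, 20]]
```

With four ranks the exchange completes in log2(4) = 2 rounds. This communication is the work that an offloaded collective moves off the host CPU.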

Typical Deployments

- High-performance computing (HPC): large-scale clusters running weather simulation, computational fluid dynamics, and molecular dynamics.

- AI and machine learning: distributed training of deep neural networks that require high throughput and low latency.

- Enterprise data centers: NVMe-oF storage targets, database acceleration, and virtualized infrastructure.

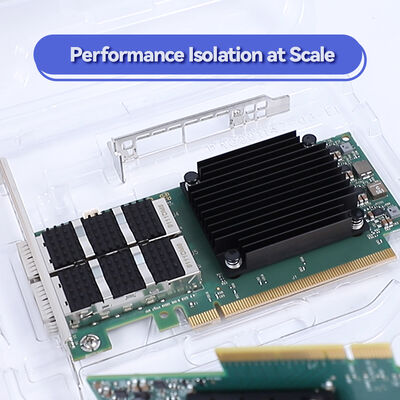

- Hyperscale cloud: multi-tenant environments that require hardware-based isolation and quality of service (QoS).

- Liquid-cooled platforms: compatible with Intel Server System D50TNP cold-plate designs for high-density deployments.

Compatibility

Systems and CPUs: x86, Power, Arm, GPU (with GPUDirect), and FPGA platforms.

Switches: fully interoperable with NVIDIA Quantum InfiniBand switches up to 200 Gb/s and with standard Ethernet switches.

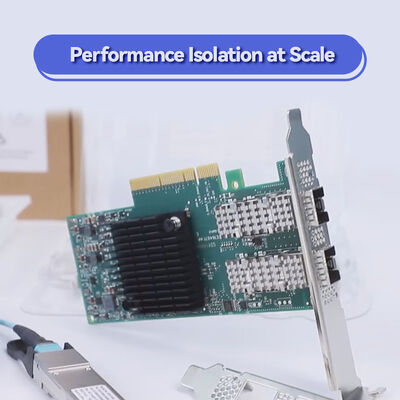

Cables: passive copper cables (DAC) and active optical cables (AOC) with QSFP56 connectors.

Technical Specifications

| Parameter | Details |
|---|---|
| Product Name | NVIDIA ConnectX-6 MCX653106A-HDAT |
| Supported Speeds | InfiniBand: HDR (200 Gb/s), HDR100, EDR, FDR, QDR, DDR, SDR; Ethernet: 200/100/50/40/25/10/1 GbE |
| Network Ports | 2x QSFP56 |
| Host Interface | PCIe Gen 4.0/3.0 x16 (also supports x8, x4, x2, x1) |
| Message Rate | Up to 215 million messages/s |
| InfiniBand Features | RDMA, XRC, DCT, ODP, hardware congestion control, 16 million I/O channels, 8 VLs + VL15 |
| Ethernet Offloads | RoCE, LSO/LRO, checksum offload, RSS/TSS, VXLAN/NVGRE/Geneve offload |
| Storage Offloads | NVMe-oF (target/initiator), T10-DIF, SRP, iSER, SMB Direct |
| Security | Hardware XTS-AES 256/512-bit block encryption, FIPS compliant |
| Management | NC-SI, MCTP over SMBus/PCIe, PLDM for monitoring/firmware update, I2C, JTAG |
| Dimensions | 167.65 mm × 68.90 mm (without brackets) |
| Regulatory | RoHS compliant, ODCC compatible |

Note: Specifications are based on available documentation. Please confirm full details before ordering.

Selection Guide: MCX653106A-HDAT

This model is the dual-port QSFP56 version in a PCIe stand-up form factor. It supports both InfiniBand and Ethernet at speeds up to 200 Gb/s. For single-port needs, consider the MCX653105A-HDAT. For the OCP 3.0 form factor, see the MCX653436A-HDAT series.

| Ports | Form Factor | OPN | Use Case |
|---|---|---|---|
| 2x QSFP56 | PCIe Stand-up | MCX653106A-HDAT | 200 Gb/s dual-port for high-availability HPC/AI nodes |
| 1x QSFP56 | PCIe Stand-up | MCX653105A-HDAT | 200 Gb/s single-port for standard compute |
| 2x QSFP56 | Socket Direct | MCX654106A-HCAT | Optimized for multi-socket servers |

ConnectX-6 MCX653106A-HDAT Advantages

- Future-ready I/O: PCIe 4.0 support provides the host bandwidth needed by next-generation CPUs and GPUs.

- Secure by default: built-in FIPS-compliant block encryption in the adapter removes the need for self-encrypting drives.

- Infrastructure consolidation: a single adapter supports both InfiniBand and Ethernet, simplifying inventory.

- Scalable storage: full NVMe-oF offloads reduce CPU overhead in disaggregated storage architectures.

Service and Support

Backed by Hong Kong Starsurge Group's experienced technical team, we offer:

- Pre-sales configuration assistance for your HPC or enterprise environment.

- Worldwide shipping with tracking and secure packaging.

- Firmware update guidance and driver download support.

- Warranty and RMA services (terms may vary by region).

Frequently Asked Questions

Q: Is this card compatible with standard Ethernet switches?

A: Yes. The MCX653106A-HDAT supports both InfiniBand and Ethernet, and can operate at 200/100/50/40/25/10/1 GbE.

Q: Does it support NVIDIA GPUDirect?

A: Yes. It supports GPUDirect RDMA and PeerDirect for direct GPU-to-GPU communication across the network.

Q: What is the difference between the MCX653106A-HDAT and the MCX653106A-ECAT?

A: The -HDAT suffix denotes the higher-speed version supporting 200 Gb/s, while -ECAT typically denotes a slower (100 Gb/s) version. Always check the ordering guide.

Q: Can I use this card in a PCIe Gen 3 slot?

A: Yes. It is backward compatible with PCIe Gen 3.0, but the slower bus becomes the bottleneck: a Gen 3.0 x16 link limits the card to roughly 100 Gb/s in total, shared across both ports, rather than per port.
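The bus limit in this answer follows from PCIe arithmetic. The sketch below uses the standard per-lane signalling rates (2.5, 5, 8, and 16 GT/s) and line encodings (8b/10b for Gen 1.1 and 2.0, 128b/130b for Gen 3.0 and 4.0) to compute per-direction bandwidth for an x16 link, then caps it at the card's 200 Gb/s aggregate from the feature list. These figures are before packet-level (TLP/DLLP) overhead, so delivered throughput is lower; a Gen 3.0 x16 link comes out at about 126 Gb/s raw, consistent with the practical figure of roughly 100 Gb/s.

```python
# Per-lane signalling rate (GT/s) and line-encoding efficiency by PCIe generation.
PCIE_GENS = {
    "1.1": (2.5, 8 / 10),      # 8b/10b encoding
    "2.0": (5.0, 8 / 10),      # 8b/10b encoding
    "3.0": (8.0, 128 / 130),   # 128b/130b encoding
    "4.0": (16.0, 128 / 130),  # 128b/130b encoding
}


def pcie_gbps(gen: str, lanes: int = 16) -> float:
    """Per-direction bandwidth after line encoding, before TLP/DLLP overhead."""
    gt_per_s, efficiency = PCIE_GENS[gen]
    return gt_per_s * efficiency * lanes


def host_limited_gbps(gen: str, lanes: int = 16, card_cap_gbps: float = 200.0) -> float:
    """Upper bound on aggregate card throughput: the lower of card cap and bus."""
    return min(card_cap_gbps, pcie_gbps(gen, lanes))


if __name__ == "__main__":
    for gen in PCIE_GENS:
        print(f"PCIe {gen} x16: {pcie_gbps(gen):6.1f} Gb/s raw, "
              f"card ceiling {host_limited_gbps(gen):6.1f} Gb/s")
```

Only Gen 4.0 x16 (about 252 Gb/s raw) leaves headroom above the 200 Gb/s the card can move; every slower generation or narrower link caps both ports together.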

Installation Precautions

- Ensure adequate airflow; the adapter may require active cooling in dense environments.

- Use only QSFP56 modules and cables validated for 200 Gb/s operation to avoid link instability.

- If you are using a Socket Direct version, verify that your motherboard supports PCIe slot bifurcation.

- Confirm the power budget: the card draws power from the PCIe slot, and high-power optical modules may need additional power headroom.

About Hong Kong Starsurge Group Co., Limited

Founded in 2008, Hong Kong Starsurge Group is a technology-driven provider of networking hardware, IT services, and system integration solutions. We serve customers worldwide with products including network switches, network interface cards (NICs), wireless access points, controllers, and related networking equipment. Our experienced sales and technical support team serves industries such as government, healthcare, manufacturing, education, finance, and enterprise. With a customer-centric approach, Starsurge focuses on reliable quality, responsive service, and tailored solutions that help customers build efficient, scalable, and reliable network infrastructure. We offer Internet of Things (IoT) solutions, network management systems, custom software development, multilingual support, and global delivery.

Key Facts at a Glance

Compatibility Matrix

| Component | Supported? | Notes |
|---|---|---|
| NVIDIA Quantum switches | Yes | Full interoperability at 200 Gb/s HDR |
| PCIe Gen 4.0 motherboards | Yes | Full 200 Gb/s line rate |
| PCIe Gen 3.0 motherboards | Yes | Limited by the host bus to roughly 100 Gb/s total across both ports |
| VMware vSphere | Yes | Drivers available |
| Intel D50TNP (liquid-cooled) | Special SKU | A cold-plate version exists; confirm the OPN |

Buyer's Checklist

- Confirm the server has a free PCIe 4.0 x16 slot (both physical and electrical).

- Check your InfiniBand fabric speed: this card is HDR (200 Gb/s) capable.

- Choose the appropriate QSFP56 modules (SR, LR, or DAC) for your link distance.

- Confirm sufficient power and cooling for high-speed operation.

- Verify operating system and driver support: RHEL, Ubuntu, Windows Server, etc.
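After installation, one way to confirm the first checklist item on Linux is to read the negotiated link from the standard PCI sysfs attributes `current_link_speed` and `current_link_width`. This is a minimal sketch under stated assumptions: the PCI address `0000:3b:00.0` is hypothetical (look up the real one with `lspci`), the helper names are illustrative, and because the speed string format varies by kernel version (for example `16.0 GT/s PCIe` or `8 GT/s`), only the leading number is parsed. Any result below Gen 4.0 x16 means the host link caps the card below 200 Gb/s.

```python
from pathlib import Path
from typing import List, Tuple


def read_pcie_link(bdf: str, sysfs_root: str = "/sys") -> Tuple[float, int]:
    """Return (speed in GT/s, lane count) of the negotiated PCIe link."""
    dev = Path(sysfs_root) / "bus" / "pci" / "devices" / bdf
    speed_text = (dev / "current_link_speed").read_text().strip()
    width_text = (dev / "current_link_width").read_text().strip()
    try:
        speed = float(speed_text.split()[0])  # "16.0 GT/s PCIe" -> 16.0
    except (IndexError, ValueError):
        speed = 0.0  # e.g. "Unknown" when the link is not trained
    return speed, int(width_text)


def check_gen4_x16(bdf: str, sysfs_root: str = "/sys") -> List[str]:
    """Hypothetical check: list reasons the link is below PCIe 4.0 x16."""
    speed, width = read_pcie_link(bdf, sysfs_root)
    problems = []
    if speed < 16.0:
        problems.append(f"link speed {speed:g} GT/s is below PCIe 4.0 (16 GT/s)")
    if width < 16:
        problems.append(f"link width x{width} is narrower than x16")
    return problems
```

Calling `check_gen4_x16("0000:3b:00.0")` on the host returns an empty list when the card trained at Gen 4.0 x16, and one message per shortfall otherwise.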